What is the echo chamber effect?

Some critics of online journalism are worried about a phenomenon called the “echo chamber effect,” which describes an increasingly common situation where readers are only shown content that reinforces their current political or social views, without ever being challenged to think differently. The cause of the echo chamber effect comes down to the machine learning algorithms employed by companies like Google and Facebook, which aim to serve users content that is tailored to their interests. On the surface, it may be difficult to see these goals as anything but beneficial. Personalized algorithms allow users to find the information they want without sifting through pages of irrelevant content. The algorithms also allow companies to match users more accurately to ads that align with their preferences, which improves the companies’ revenue stream.

However, the risk these algorithms pose is that they effectively filter out search results that do not accord with a user’s current viewpoint. For example, Eli Pariser, the author of "The Filter Bubble," shows in his TED talk how people with differing political views are shown radically different results for exactly the same Google search. In one informal experiment, Pariser asked a group of friends to search for the term “Egypt” at the same time and compared two of their results. In his words:

“I asked a bunch of friends to Google "Egypt" and to send me screenshots of what they got… you don't even have to read the links to see how different these two pages are. But when you do read the links, it's really quite remarkable. Daniel didn't get anything about the protests in Egypt at all in his first page of Google results. Scott's results were full of them. And this was the big story of the day at that time. That's how different these results are becoming.”

This is particularly troublesome because we are unable to tell when we are caught up in the filtering; for example, there is no way to totally “uncustomize” a Google search. Further, Pariser warns that this result is not an anomaly and that the echo chamber effect is even more widespread than we would imagine. According to him,

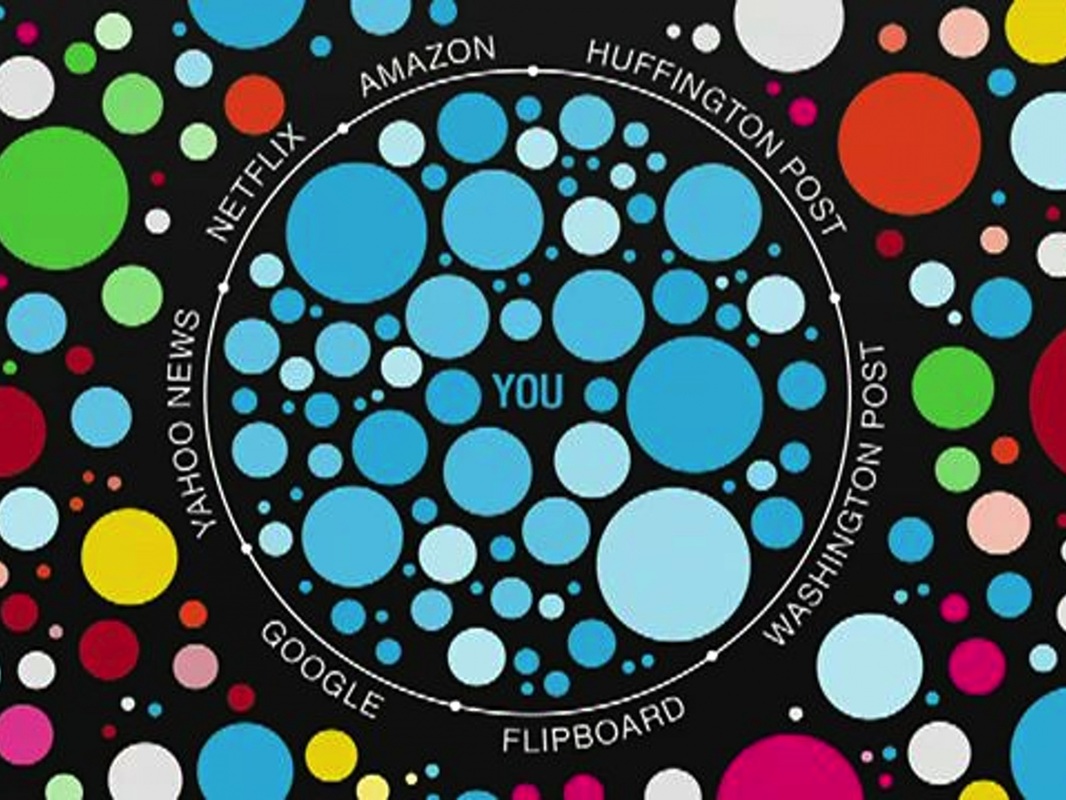

“It’s not just Google and Facebook either. This is something that's sweeping the Web. There are a whole host of companies that are doing this kind of personalization. Yahoo News, the biggest news site on the Internet, is now personalized--different people get different things. Huffington Post, the Washington Post, the New York Times—all flirting with personalization in various ways. And this moves us very quickly toward a world in which the Internet is showing us what it thinks we want to see, but not necessarily what we need to see.”

Even Eric Schmidt, the executive chairman of Google, acknowledges the troubling implications of unavoidably tailored search, saying "[soon] it will be very hard for people to watch or consume something that has not in some sense been tailored for them."

Pariser’s example suggests that the echo chamber harms the public’s awareness of important issues, as it limits who can discover information about certain current events. Further, situations where people are only exposed to information that reinforces their current beliefs cause polarization, because individuals become more convinced of their correctness when they do not see a diverse set of expert opinions.

Why the echo chamber effect is not so bad

While the echo chamber effect is certainly troubling, it is not a new problem for the media industry. There have always been gatekeepers of information, specifically publication editors or news show hosts, whose job was to curate the content their audiences would see. Several studies have confirmed that, with some exceptions, user consumption of news is mostly passive. In other words, viewers tend to settle into certain viewing or reading habits (such as Fox News or The Economist), which become comfortable to them and inevitably shape the range of opinionated content that they are exposed to. One such study noted that:

“Those viewers who can be counted on to watch a news program are not at all drawn to their set from their various pursuits by the appeal of the program; for the main part they are already watching television at that hour, or disposed to watch it then, according to the audience research studies that networks have conducted over the years.”

Personalization algorithms have changed this paradigm by replacing the human gatekeepers of information with machines. Now it is not a human editor who chooses which stories appear on your Yahoo News page, but a machine-learning algorithm. This difference gives us some cause for optimism, because such learning algorithms rely heavily on a user’s Internet history. Once a user begins seeking out opinions outside of their observed preference bubble (perhaps by clicking on a link to a controversial story sent by a friend with differing beliefs), that user’s future search results should expand accordingly. Sharing across social networks therefore has the potential to turn personalized search against the echo chamber effect. The takeaway is that a machine gatekeeper can adapt to changing user preferences (and even encourage these changes by providing some limited but dramatic variation in the content served), whereas a human gatekeeper, such as a Fox News host, cannot, because they choose content for a large audience whose preferences are defined with little interaction.

In short, the echo chamber effect is rooted in the longstanding problem of users perpetuating their own consumption habits rather than in personalization algorithms. For example, Facebook responded to claims that its news feed personalization algorithms were instigating the echo chamber by running a study, which concluded that habituated user behaviors, and not the algorithms, were to blame for the lack of story diversity in users’ pages.
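To make the idea of an adaptive machine gatekeeper concrete, here is a minimal sketch in Python. It is a toy model, not a description of how Google, Facebook, or Yahoo actually rank content: the function name `personalized_feed`, the (title, viewpoint) story format, the sample headlines, and the scoring rule are all assumptions made up for illustration. The rule favors viewpoints the user has clicked before and discounts a viewpoint each time it is already represented in the feed.

```python
from collections import Counter


def personalized_feed(stories, click_history, size=3):
    """Pick `size` stories, favoring viewpoints the user has clicked before.

    stories: list of (title, viewpoint) tuples (hypothetical data model).
    click_history: list of viewpoints the user clicked in the past.
    """
    clicks = Counter(click_history)
    remaining = list(stories)
    shown = Counter()  # stories of each viewpoint already placed in the feed
    feed = []
    while remaining and len(feed) < size:
        # Score = past clicks on the viewpoint, discounted by how many
        # stories with that viewpoint are already in the feed.
        best = max(remaining, key=lambda s: clicks[s[1]] / (1 + shown[s[1]]))
        feed.append(best)
        shown[best[1]] += 1
        remaining.remove(best)
    return feed


# Made-up headlines, labeled only as "left" or "right" for illustration.
stories = [
    ("Tax cut praised", "right"),
    ("Tax cut criticized", "left"),
    ("Border policy defended", "right"),
    ("Border policy attacked", "left"),
    ("Budget deal welcomed", "right"),
]

# A user who has only ever clicked one viewpoint sees only that viewpoint.
before = personalized_feed(stories, ["right", "right", "right"])

# After following two links shared by a friend with differing beliefs,
# the same toy algorithm starts to mix the other viewpoint into the feed.
after = personalized_feed(stories, ["right", "right", "right", "left", "left"])
```

In this toy model, `before` contains only "right" stories, while `after` includes a "left" story as well. The design choice worth noting is that nothing in the scoring rule is fixed in advance: the feed is driven entirely by the click history, so a change in the user's behavior changes what the gatekeeper serves.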
